
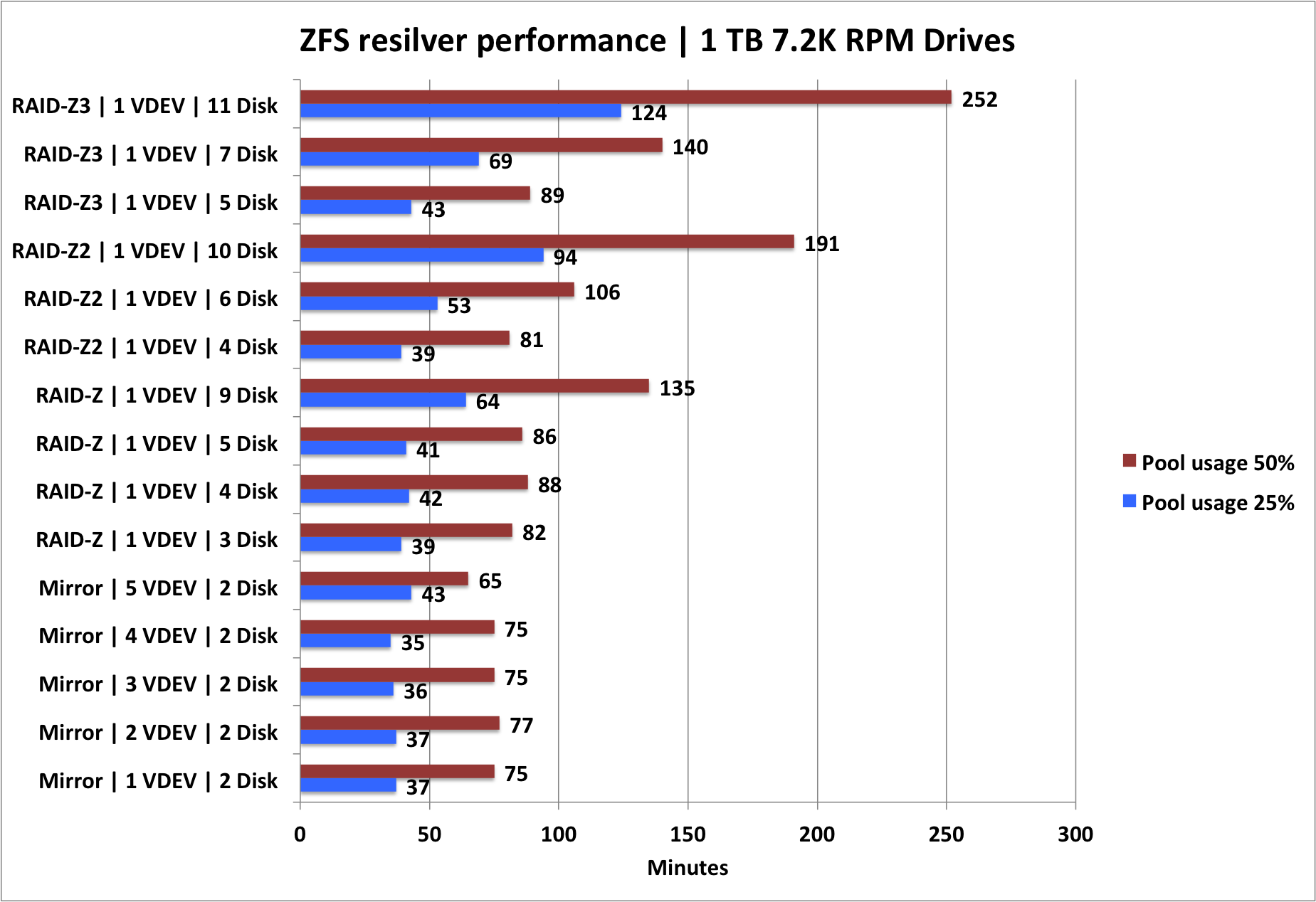

10/29/2022

ZFS RAID level OpenZFS

For the uninformed, ZFS is one of the rarities in the storage industry that combines the volume manager and the file system as one. Unlike traditional volume management, ZFS merges both the physical data storage representations (e.g. hard disk drives, solid state drives) and the logical data structures (e.g. RAID stripe, mirror, RAID-Z1, Z2, Z3) together with a highly reliable file system that scales.

For a storage practitioner like me, working with ZFS brings an "I get it!" moment every time, because its beauty lies in rolling both power and simplicity into one. So, when OpenZFS founding developer and ZFS co-creator Matt Ahrens announced the review of the RAID-Z expansion feature in OpenZFS in June 2021, there was elation! Within weeks of the RAID-Z expansion announcement, OpenZFS 2.1 was out, and finally, dRAID (distributed RAID) was GAed as well! I went nuts! Double Happiness! The announcements of both RAID-Z expansion and dRAID within weeks of each other were special. They were rare events, and I hope to do them justice, if only to learn about them myself.

The risk of data loss during the time it takes to rebuild or reconstruct (in ZFS terms, resilver) a RAID volume has been a bugbear in the storage industry for as long as anyone can remember. Rebuilding a volume from a handful of hard disk drives was too slow (from hours, to days, and now to weeks), a problem further exacerbated by RAID-6 dual parity configurations. With hard disk drive capacities soon hitting 20TB and beyond, many storage vendors already offer triple parity RAID as well. And each vendor has its own proprietary RAID rebuild mechanism, with the prime objective of returning the volume to a healthy state as fast as possible and recovering the data blocks from the failed disk(s).

I broached the subject in 2012 in my blog "4TB disks – The end of RAID", but even earlier, Enterprise Storage Forum had an article named "Can Declustering save RAID Storage Technology?" Both my blog and ESF mentioned Dr. Garth Gibson's work on RAID declustering, a precursor to dRAID. IBM® also provided distributed RAID through its SAN Volume Controller, and that technology also predates OpenZFS dRAID. I have followed OpenZFS dRAID since Isaac Huang's presentation at the OpenZFS Developer Summit in 2015.

dRAID is a vdev (a virtual device, similar to a RAID volume) topology. This topology is a logical overlay over the traditional fixed-disk vdevs, and the storage admin can define the quantity of data, parity and hot spare sectors per logical dRAID stripe. Because the stripe width in OpenZFS is not fixed (unlike many other storage arrays' RAID implementations), this opens up many different permutations of a dRAID vdev, each with different performance, reliability, capacity and resilvering speed profiles.

You can't attach a drive to a RAID-Z vdev

As Matt Ahrens eloquently described at the FreeBSD Developer Summit in June 2021 (YouTube video), RAID-Z expansion was not possible at the time. But this will soon be a thing of the past, because the early development work on RAID-Z expansion now allows additional drive(s) to be added to a RAID-Z1/2/3 vdev, extending the vdev's width (but not the stripe width) while retaining its RAID-Z level. The storage capacity of the vdev grows, but the data-to-parity ratio of the existing stripes does not change. Only newly written data is striped over the vdev's new logical width, while existing data keeps its original stripe width.

In many parity-based RAID implementations, the stripe widths are fixed, and some also use dedicated drives for parity. This means that when a new drive (or drives) is added, the data and parity blocks are likely read, the parity recalculated, and everything rewritten to the new stripe width, as shown in the box on the left above. In RAID-Z expansion (box above on the right), the existing stripe distribution is merely adjusted by copying only the blocks that need to be copied. The space allocation map can provide the guidance for this adjustment, a technique now called Reflow. And it does not matter where the parity segment ends up, because the OpenZFS logical stripe width is dynamic and variable, and each stripe is written as a full stripe.
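
To make the "capacity grows, but old stripes keep their data-to-parity ratio" point concrete, here is a minimal Python sketch. It is a simplified model, not OpenZFS code: it assumes each RAID-Z row holds (width − parity) data sectors plus `parity` parity sectors, with each block's allocation padded to a multiple of (parity + 1). The `Block` and `RaidzVdev` classes are hypothetical, and each block simply remembers the width it was written with, which is a modelling shortcut rather than how OpenZFS actually tracks it. The physical reflow of existing data onto the new disk is not modelled.

```python
from dataclasses import dataclass, field


def ceil_div(a: int, b: int) -> int:
    """Integer ceiling division."""
    return -(-a // b)


def raidz_sectors(data_sectors: int, width: int, parity: int) -> int:
    """Sectors one block occupies on a RAID-Z vdev (simplified model).

    Each row holds (width - parity) data sectors plus `parity` parity
    sectors; the total is padded up to a multiple of (parity + 1).
    """
    rows = ceil_div(data_sectors, width - parity)
    total = data_sectors + parity * rows
    return ceil_div(total, parity + 1) * (parity + 1)


@dataclass
class Block:
    data_sectors: int
    width_at_write: int  # hypothetical: logical width in effect when written


@dataclass
class RaidzVdev:
    width: int   # number of disks in the vdev
    parity: int  # 1, 2 or 3 for RAID-Z1/2/3
    blocks: list = field(default_factory=list)

    def write(self, data_sectors: int) -> None:
        # New writes are striped over the vdev's current width.
        self.blocks.append(Block(data_sectors, self.width))

    def expand(self, new_disks: int = 1) -> None:
        # Only the vdev width changes; existing blocks keep the width,
        # and therefore the data:parity ratio, they were written with.
        self.width += new_disks

    def allocated(self) -> int:
        return sum(raidz_sectors(b.data_sectors, b.width_at_write, self.parity)
                   for b in self.blocks)

    def data(self) -> int:
        return sum(b.data_sectors for b in self.blocks)


if __name__ == "__main__":
    vdev = RaidzVdev(width=6, parity=2)  # hypothetical 6-disk RAID-Z2
    for _ in range(10):
        vdev.write(256)                  # 1 MiB blocks, assuming 4 KiB sectors
    print(vdev.allocated(), round(vdev.data() / vdev.allocated(), 3))
    vdev.expand(1)                       # attach a 7th disk
    for _ in range(10):
        vdev.write(256)
    print(vdev.allocated(), round(vdev.data() / vdev.allocated(), 3))
```

Under these assumptions, the ten blocks written before expansion still cost 384 sectors each (4 data : 2 parity per row), while the ten written afterwards cost 360 each (5 : 2 per row). Total allocation therefore goes from 3,840 to 7,440 sectors, and space efficiency only improves gradually as new data lands on the wider layout.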

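The dRAID permutations mentioned earlier can also be enumerated. This rough Python sketch, offered under stated assumptions, lists every data width that fits a given drive count, parity level and number of distributed spares. The spec strings follow the `draid[parity][:data d][:children c][:spares s]` vdev syntax as I understand it from the `zpool` documentation. The 24-drive example, the `DraidLayout` and `layouts` names, and the usable-capacity estimate are all hypothetical simplifications: the estimate ignores padding, metadata and other overheads, and nothing here models resilver speed.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class DraidLayout:
    parity: int    # parity sectors per redundancy group
    data: int      # data sectors per redundancy group
    children: int  # total drives in the dRAID vdev
    spares: int    # distributed hot spares

    def spec(self) -> str:
        # Formatted like a zpool dRAID vdev string, e.g. "draid2:8d:24c:2s".
        return f"draid{self.parity}:{self.data}d:{self.children}c:{self.spares}s"

    def usable_fraction(self) -> float:
        # Rough estimate only: spare capacity is set aside, and each group
        # stores `data` data sectors per `data + parity` sectors written.
        non_spare = (self.children - self.spares) / self.children
        return non_spare * self.data / (self.data + self.parity)


def layouts(children: int, parity: int, spares: int) -> list[DraidLayout]:
    """Every data width whose group (data + parity) fits in the non-spare drives."""
    max_data = children - spares - parity
    return [DraidLayout(parity, d, children, spares) for d in range(1, max_data + 1)]


if __name__ == "__main__":
    # Hypothetical 24-drive enclosure, double parity, two distributed spares.
    for layout in layouts(children=24, parity=2, spares=2):
        print(f"{layout.spec():<20} ~{layout.usable_fraction():.1%} usable")
```

Even with parity and spares held fixed, a 24-drive box yields 20 candidate layouts. Wider data groups trade some small-write behaviour and rebuild characteristics for capacity, which is the kind of profile trade-off the storage admin gets to choose between with dRAID.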